Holograms 240 Issue: Shared Room Offset in Real World

I'm creating an app using the sharing service from Holograms 240. Everything works as it is supposed to: the two users join the room, and each can see the cube that represents the other's head in the proper position around the central hologram. (To simplify things, I grabbed the RemoteHeadManager script from HoloToolkit-Unity to use instead of the RemotePlayerManager. It seems to work fine; the head movement matches perfectly.)

However, the room itself is in a different location for each user, at an offset approximately equal to the distance between the two HoloLens devices. As a result, the cubes that represent the heads do not line up with the actual HoloLens devices in the real world.

From what I understand from reading other questions here, the first HoloLens sends a world anchor to any HoloLens that connects. The receiving HoloLens then attempts to place the world anchor according to what it can see. I'm not sure why the second HoloLens in my case cannot place the world anchor accurately, given that both devices are in the same room.

Am I missing something? Or is this how the tutorial is supposed to work?

Best Answer

HoloSheep

mod

@HemantVirkar it is important that both HoloLens devices have a good and fairly accurate and detailed spatial map of the room so that the tracking points can lock onto the room in the same way.

You might want to check the 3D View in the Device Portal for each device to see whether both have built a similar and fairly complete mesh of the room. If not, exploring the room from the Shell and air tapping on surfaces to build up the spatial maps on both devices before starting the shared experience might result in better alignment.

Windows Holographic User Group Redmond

WinHUGR.org - - - - - - - - - - - - - - - - - - @WinHUGR

WinHUGR YouTube Channel -- live streamed meetings

Answers

Hello, we had the same problem, and updating the system to the current version solved it.

My current firmware version: 10.0.14393.0

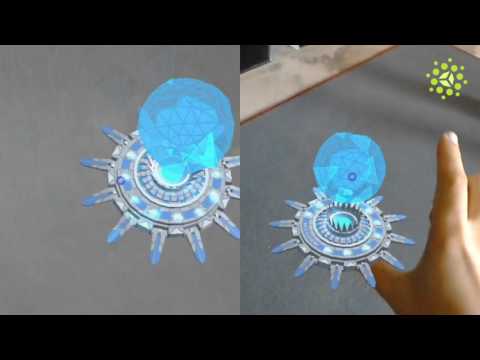

Here is a video of what we managed to get:

https://www.youtube.com/watch?v=C7LGPQJcVEM&feature=youtu.be&t=19

I have the same problem, even though I remapped the room carefully on both devices. Looking at the 3D views in the Device Portal, the meshes are similar. My current firmware version: 10.0.14393.0

Any help is appreciated.

Thanks

I'm having the same problem as described here. The sharing and loading of anchors works, but there is an offset to where that anchor exists.

The strange thing is that the spatial anchors, when viewed in the 3D View of each HoloLens, look accurate and in the correct location on both.

However, the head tracking is off by about 0.5 feet, and the cube I'm placing into the scene is off by the same amount on one of the HoloLens devices.

I'm starting the second hololens experience remotely via the portal while wearing the first one.

Both HoloLens devices are up to date as of 4/1/2017:

10.0.14393.953

If anyone has any suggestions on how to fix the offsets it would be much appreciated.

I've run into what I think are similar issues. The spatial anchor appears in roughly the correct spot after a bit of time moving the two devices around, but the orientation is flipped 180 degrees around the y-axis. Careful remapping of the space on the two devices makes no difference, nor does starting the application from the same location and vantage point.

Any of you guys figure this one out?

One thing that could happen is your main camera could have a transform applied to it. But if that's the issue then starting both instances of the application from the same position would at least produce the same wrong result. However, even a small delta in rotation between the two devices could have a significant impact.
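To put a rough number on the "small delta in rotation" point, here is a minimal sketch in plain Python (not Unity or HoloToolkit code). It assumes the two devices agree on a pivot point but disagree about the yaw of the shared frame, and computes how far apart they would then place a hologram at a given distance from that pivot. The function name `offset_from_rotation_delta` is made up for this illustration.

```python
import math

def offset_from_rotation_delta(distance_m, delta_degrees):
    """Distance between the two placements of one point when two frames
    share a pivot but differ in rotation by delta_degrees.

    This is the chord length of a circle of radius distance_m
    subtending the angle delta_degrees.
    """
    half_angle = math.radians(delta_degrees) / 2.0
    return 2.0 * distance_m * math.sin(half_angle)

# How the disagreement grows with the rotation delta for a hologram
# 3 m from the pivot (the 3 m figure is an arbitrary example value).
for delta in (1, 2, 5, 10):
    offset = offset_from_rotation_delta(3.0, delta)
    print(f"{delta:>2} deg at 3 m -> {offset:.3f} m")
```

Under these assumptions, a 2 degree disagreement already moves a hologram 3 m away by roughly 10 cm, so an offset of about half a foot does not require a large rotation error.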

===

This post provided as-is with no warranties and confers no rights. Using information provided is done at own risk.

(Daddy, what does 'now formatting drive C:' mean?)

ImportExportAnchorManager sends its localPosition and localRotation, which need to be the position and rotation of the placed anchor, not those of an empty game object.
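The following is a simplified 2D sketch in plain Python of why that matters. It is not the HoloToolkit implementation: the helpers `to_local` and `to_world` and all of the coordinates are hypothetical, and it assumes each device has its own app origin at the spot where the app was started. Positions sent relative to the shared anchor land in the right physical place on the other device, while positions sent relative to an unplaced object sitting at the sender's app origin are off by the distance between the two starting positions.

```python
import math

def to_local(frame_pos, frame_yaw_deg, world_pos):
    """Express a world-space point (x, z) in the local space of a frame."""
    yaw = math.radians(frame_yaw_deg)
    dx = world_pos[0] - frame_pos[0]
    dz = world_pos[1] - frame_pos[1]
    return (dx * math.cos(yaw) + dz * math.sin(yaw),
            -dx * math.sin(yaw) + dz * math.cos(yaw))

def to_world(frame_pos, frame_yaw_deg, local_pos):
    """Convert a frame-local point (x, z) back to world space."""
    yaw = math.radians(frame_yaw_deg)
    lx, lz = local_pos
    return (frame_pos[0] + lx * math.cos(yaw) - lz * math.sin(yaw),
            frame_pos[1] + lx * math.sin(yaw) + lz * math.cos(yaw))

# Assumed setup: device B started 1.5 m to the right of device A, facing
# the same way, so the same physical anchor has different coordinates
# in each device's own app space.
anchor_in_a = (1.0, 2.0)
anchor_in_b = (-0.5, 2.0)
head_a_in_a = (0.0, 0.0)   # A's head, in A's coordinates

# Correct: send the head position relative to the placed anchor.
head_local = to_local(anchor_in_a, 0.0, head_a_in_a)
anchored = to_world(anchor_in_b, 0.0, head_local)

# Incorrect: send it relative to an empty object left at A's app origin,
# which B then interprets relative to its own origin.
naive = head_a_in_a

error = math.hypot(anchored[0] - naive[0], anchored[1] - naive[1])
print("anchored:", anchored, "naive:", naive, "error (m):", error)
```

In this model the error equals the 1.5 m separation between the two starting positions, which matches the symptom in the original question of an offset roughly equal to the distance between the two devices.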