Hello everyone.

The Mixed Reality Forums here are no longer being used or maintained.

There are a few other places we would like to direct you to for support, both from Microsoft and from the community.

The first way we want to connect with you is our mixed reality developer program, which you can sign up for at https://aka.ms/IWantMR.

For technical questions, please use Stack Overflow, and tag your questions using either hololens or windows-mixed-reality.

If you want to join in discussions, please do so in the HoloDevelopers Slack, which you can join by going to https://aka.ms/holodevelopers, or in our Microsoft Tech Communities forums at https://techcommunity.microsoft.com/t5/mixed-reality/ct-p/MicrosoftMixedReality.

And always feel free to hit us up on Twitter @MxdRealityDev.

Access the RGB camera (or Locatable Camera) at 30fps?

Does anyone know how to access the RGB camera (or Locatable Camera) at 30 frames per second in Unity? I've already tried these approaches without success:

- Call PhotoCapture.TakePhotoAsync on each Update() frame.

- Use Unity's WebCamTexture.

I didn't measure the framerate for the first one but it was probably around 5-10fps. Using WebCamTexture with an 896x504 image, I was able to get around 20fps. I can think of only 3 other options right now:

- Call PhotoCapture.TakePhotoAsync with InvokeRepeating (similar to this blog post), but I'm assuming that this will still be too slow.

- Bypass Unity's web camera functions and try to directly access the camera, perhaps by using one of the Windows Universal Samples.

- Wait until Unity's wrapper for LocatableCamera has a function to do this. Does anyone know if Unity is planning to implement this?

Notes:

- Just calling Play() on a WebCamTexture drops the framerate from 60fps to 20fps! That is, I'm not doing any extra processing with the WebCamTexture; I'm only calling Play().

- A downside of using WebCamTexture is that it doesn't give you the position or rotation of the camera for that frame, unlike using LocatableCamera. I suppose a possible remedy is to find the rigid transformation between Camera.main.transform and the transform given by LocatableCamera.
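
The remedy in the last note could be sketched roughly like this. It is only a sketch, assuming you can grab one camera-to-world matrix from LocatableCamera and Camera.main.transform.localToWorldMatrix at (nearly) the same instant, and that the RGB camera is rigidly mounted relative to the head pose. CameraOffsetEstimator and its method names are hypothetical helpers, not part of Unity; only Matrix4x4, its inverse property, and the * operator are Unity APIs.

```csharp
using UnityEngine;

// Hypothetical helper for illustration; not part of Unity or the HoloLens APIs.
public static class CameraOffsetEstimator
{
    // Computes the fixed transform that maps RGB-camera coordinates into
    // head (Camera.main) coordinates, from one pair of poses captured together.
    // Both inputs map local coordinates to world coordinates.
    public static Matrix4x4 ComputeCameraToHead(Matrix4x4 headToWorld, Matrix4x4 cameraToWorld)
    {
        // world <- camera, then head <- world.
        return headToWorld.inverse * cameraToWorld;
    }

    // Estimates the RGB camera's camera-to-world matrix for a later frame,
    // given the current head pose and the previously computed fixed offset.
    public static Matrix4x4 EstimateCameraToWorld(Matrix4x4 headToWorldNow, Matrix4x4 cameraToHead)
    {
        // head <- camera, then world <- head.
        return headToWorldNow * cameraToHead;
    }
}
```

You would call ComputeCameraToHead once, then call EstimateCameraToWorld with Camera.main.transform.localToWorldMatrix for each WebCamTexture frame. The result is only an approximation: the head pose read in Update() is not necessarily the pose at the moment the image was exposed, so expect some error when the head is moving.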

Answers

Have you tried using VideoCapture instead of PhotoCapture? That should run ~30fps.

@ahillier VideoCapture doesn't give me access to the frames; it only saves the video to disk. I want to access the raw image data in code at 30fps, not just save it to disk.

Hi All,

I have the same problem. I tried some approaches, but the fps is too low.

Also, I have never gotten more than 30fps. Is this normal?

@ahillier and other moderators, do you have any contacts with the folks at Unity? Would you be able to see if they have this functionality planned for implementation? I wouldn't want to duplicate unnecessary code if it's planned to be in a future Unity release.

One update: I tried the example here https://github.com/Microsoft/Windows-universal-samples/tree/master/Samples/CameraGetPreviewFrame and it runs as a 2D rectangular app on HoloLens, and the image shown gets updated very quickly, at least 30fps. However, they're sending the image to a UI element called CaptureElement. I'm not sure if we'd be able to access this at 30fps or not. Has anyone else had experience with this? I'll let you all know if I figure out anything more.

Update HoloLens to Windows 10 14393

MediaCapture + MediaFrameSource + MediaFrameReader

https://msdn.microsoft.com/en-us/library/windows/apps/xaml/windows.media.capture.frames.mediaframereader.tryacquirelatestframe.aspx
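
A rough sketch of how those three pieces could fit together is below. It is not taken from any of the samples linked in this thread. ColorFrameGrabber and its members are hypothetical names; the Windows.Media.* types and calls are the real UWP APIs. It assumes a UWP build with the webcam capability declared, and in a Unity project it would likely need to sit behind a platform guard so the editor doesn't try to compile it.

```csharp
using System;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;
using Windows.Graphics.Imaging;
using Windows.Media.Capture;
using Windows.Media.Capture.Frames;
using Windows.Media.MediaProperties;

// Hypothetical wrapper class; only the Windows.* types are real APIs.
public sealed class ColorFrameGrabber
{
    private MediaCapture _capture;
    private MediaFrameReader _reader;
    private int _frameCount;

    // Number of frames delivered so far; sample it once per second to estimate fps.
    public int FrameCount => _frameCount;

    public async Task StartAsync()
    {
        // Pick the first source group that exposes a color (RGB) stream.
        var groups = await MediaFrameSourceGroup.FindAllAsync();
        var group = groups.FirstOrDefault(g =>
            g.SourceInfos.Any(i => i.SourceKind == MediaFrameSourceKind.Color));
        if (group == null)
        {
            throw new InvalidOperationException("No color camera source group found.");
        }

        _capture = new MediaCapture();
        await _capture.InitializeAsync(new MediaCaptureInitializationSettings
        {
            SourceGroup = group,
            SharingMode = MediaCaptureSharingMode.ExclusiveControl,
            StreamingCaptureMode = StreamingCaptureMode.Video,
            // Ask for CPU-accessible SoftwareBitmap frames.
            MemoryPreference = MediaCaptureMemoryPreference.Cpu
        });

        MediaFrameSource source = _capture.FrameSources.Values
            .First(s => s.Info.SourceKind == MediaFrameSourceKind.Color);

        _reader = await _capture.CreateFrameReaderAsync(source, MediaEncodingSubtypes.Argb32);
        _reader.FrameArrived += OnFrameArrived;
        await _reader.StartAsync();
    }

    private void OnFrameArrived(MediaFrameReader sender, MediaFrameArrivedEventArgs args)
    {
        // TryAcquireLatestFrame returns null if no new frame is available.
        using (MediaFrameReference frame = sender.TryAcquireLatestFrame())
        {
            SoftwareBitmap bitmap = frame?.VideoMediaFrame?.SoftwareBitmap;
            if (bitmap == null)
            {
                return;
            }

            Interlocked.Increment(ref _frameCount);
            // Raw pixel processing would go here; the bitmap is only valid
            // until the frame reference is disposed at the end of this block.
        }
    }
}
```

FrameArrived is raised off the UI thread, so anything handed to Unity or XAML from the handler has to be marshalled back to the right thread.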

Thanks, @Huanlian . I just found these two helpful links as well:

Process media frames with MediaFrameReader and CameraFrames. Note that the first link mentions, "This feature is designed to be used by apps that perform real-time processing of media frames, such as augmented reality and depth-aware camera apps."

Unfortunately, I just tried the CameraFrames C# sample on HoloLens and it's flickering and running kind of slow. The CameraGetPreviewFrame sample works very fast with no flickering on the HoloLens.

I have yet to try this in a Unity app with Spatial Mapping enabled. @Waterine said here that the frame rate drops when mesh processing for spatial mapping is enabled.

Hi,

One question about HoloLens spatial mapping with Unity: when the environment changes, can I get the raw RGB image data with its corresponding pose in the Update callback? That is, is it possible to get a synchronized RGB image and pose while HoloLens is doing spatial mapping?

Thanks

@holoben said:

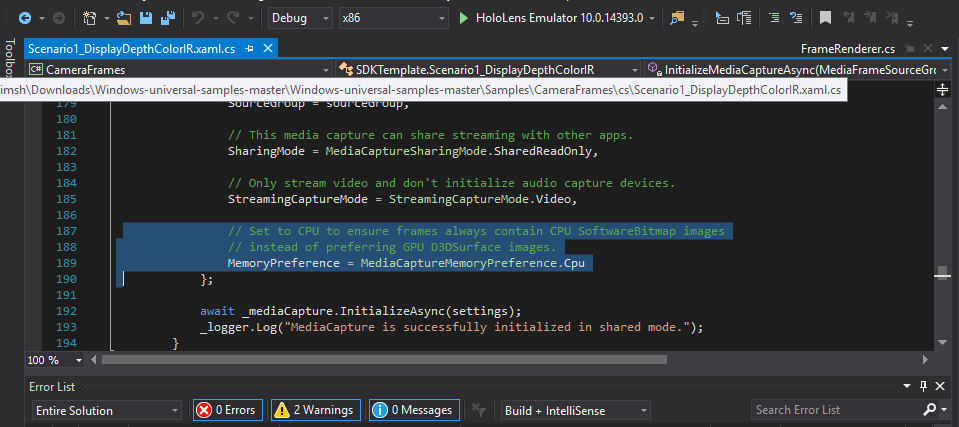

In the code for CameraFrames C# is the comment

// Set to CPU to ensure frames always contain CPU SoftwareBitmap images

// instead of preferring GPU D3DSurface images.

MemoryPreference = MediaCaptureMemoryPreference.Cpu

So IMHO there you have the reason: CameraGetPreviewFrame uses GPU D3DSurface images, while CameraFrames uses software rendering to get infrared detail.

I don't know if you can change this function without causing problems. Screenshot below shows the lines

Hi, I need to process each frame to track a laser pointer with my HoloLens. Has anybody managed to get the video capture at a decent framerate?

We just created this open source project called CameraStream to address the issue of getting the locatable camera as a video stream in Unity.

We made the API very similar to Unity's VideoCapture class so that developers would already be familiar with how to implement it.

Hopefully it will help people as we also found this feature oddly missing from the Unity/HoloLens API.

There is still a bit of work to be done on it to cover more use cases, so of course it would be great to have some Windows/HoloLens experts look at it!

Eric