Hello everyone.

The Mixed Reality Forums here are no longer being used or maintained.

There are a few other places we would like to direct you to for support, both from Microsoft and from the community.

The first way we want to connect with you is our mixed reality developer program, which you can sign up for at https://aka.ms/IWantMR.

For technical questions, please use Stack Overflow, and tag your questions using either hololens or windows-mixed-reality.

If you want to join in discussions, please do so in the HoloDevelopers Slack, which you can join by going to https://aka.ms/holodevelopers, or in our Microsoft Tech Communities forums at https://techcommunity.microsoft.com/t5/mixed-reality/ct-p/MicrosoftMixedReality.

And always feel free to hit us up on Twitter @MxdRealityDev.

GameObject Limit on performance

Hey all,

We are building an app that renders close to 3000 gameObjects (spheres) as a scatter plot.

When we look at dense areas of the scatter plot, the frame rate drops to an unbearable level. Using the profiler, we see that the frame rate is 3-8 fps. When we reduce the plot to 200 spheres, it is much better. Is there any way to improve our performance? If not, what is the actual limit on the HoloLens?

We have already done the usual (setting quality to fastest, building release instead of debug, disabling shadows, using the HoloToolkit shaders).

Wondering if there are any other possibilities to get the FPS up.

Answers

Spheres are super expensive to render. It takes a lot of polygons to make a sphere. Have you tried cubes?

We did try cubes. It didn't seem to make a significant improvement. Are there any techniques to make this easier for the HoloLens to render? Ultimately we want a dynamic scatter plot where each point can be selected, which is why we chose to make everything a GameObject.

You can also use occlusion culling. https://docs.unity3d.com/Manual/OcclusionCulling.html

http://www.redsprocketstudio.com/

Developer | Check out my new project blog

Hey @AmerAmer. Thanks a lot for the response. Can you elaborate on the instancing? Currently, I start with one sphere and create 2000 clones of it at runtime. How would I go about instancing? I tried following this: https://docs.unity3d.com/Manual/GPUInstancing.html

However, I don't think it's working.

How many draw calls do you have? If you are only drawing the spheres, there should be one draw call. If you have 2000, then it's not working correctly.

Also make sure single pass stereo rendering is turned on in your player settings (under the VR section, I think).

https://blogs.unity3d.com/2016/07/28/unity-5-4-is-out-heres-whats-in-it/

The blog above shows what happens with instancing.

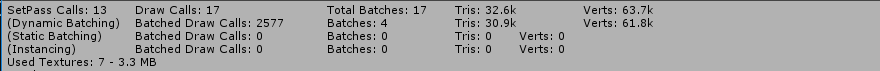

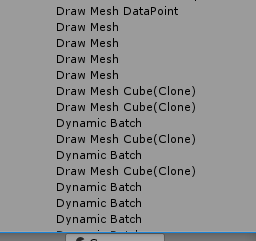

@AmerAmer Yes, I have around 2000 draw calls. I've attached my frame debugger and my stats window. Here is my current setup. I have switched to cubes (assuming they're easier to render). My original cube uses the HoloToolkit/Unlit Configurable shader. I went in and made it support instancing (i.e. added the lines from the GPU instancing manual linked in my previous post). When I clone my cube, all of the clones have the same shader.

This is the exact code I use for that.

    MeshRenderer gameObjectRenderer = clone.GetComponent<MeshRenderer>();
    //Material cloneMaterial = new Material(Shader.Find("Instanced/NewInstancedSurfaceShader"));
    Material cloneMaterial = new Material(Shader.Find("HoloToolkit/Unlit Configurable"));
    cloneMaterial.color = cloneColor;
    gameObjectRenderer.material = cloneMaterial;

I have single pass rendering checked as you mentioned.

This is puzzling: I want these to be dynamic, but I tried making the original parent cube static. That makes all the clones static as well, and changes the mesh to Combined Mesh at runtime. However, I still have 2000 draw calls. I thought static batching was automatic if the objects all had the same material/shader.

Are you using Unity free? If so, batching is not supported. If not, take a look at the other suggestions below, although they are related to batching and instancing.

Few things:

Check out this blog, he has good info on static batching, dynamic batching and instancing.

https://developer3.oculus.com/blog/squeezing-performance-out-of-your-unity-gear-vr-game/

Maybe somebody else has some ideas, but the blog above has some good suggestions as well.

@AmerAmer

Got it, so static batching isn't available. But dynamic batching is free, so that should work. I spent yesterday figuring out how to get dynamic batching working. The first problem was that every time I made a clone, it created a new instance of the material, which prevented batching. The reason this happened is interesting. The HoloToolkit scripts (things like gaze responder) access renderer.material, and any reference to .material creates a new instance of it. So I had to go into all the scripts and change them to .sharedMaterial. I changed the lighting to vertex-lit and voila, batching started to work. Here are the stats I have. It's much better than no dynamic batching, but I still think I can do better. It runs at 15 fps on the HoloLens. But it seems to have broken some things, like my cursor. Oh well.

From what I understand, instancing is a step above dynamic batching. I'm not very clear on how to get instancing to work, though. I would love to get near 50 fps.
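
For anyone following along, the .material versus .sharedMaterial difference described above can be sketched roughly like this. It is only an illustration, not the HoloToolkit code: the class name ScatterPointSpawner and the fields pointPrefab, pointMaterial, and count are made up for the example, and whether the clones actually batch still depends on the shader and mesh.

```csharp
using UnityEngine;

// Hypothetical spawner: every clone keeps pointing at ONE material asset,
// which is the precondition for dynamic batching discussed in this thread.
public class ScatterPointSpawner : MonoBehaviour
{
    public GameObject pointPrefab;   // assumed: a cube or sphere prefab with a MeshRenderer
    public Material pointMaterial;   // assumed: the single material shared by all points
    public int count = 2000;

    void Start()
    {
        for (int i = 0; i < count; i++)
        {
            GameObject clone = Instantiate(
                pointPrefab, Random.insideUnitSphere * 2f, Quaternion.identity, transform);
            MeshRenderer cloneRenderer = clone.GetComponent<MeshRenderer>();

            // Assigning sharedMaterial does not create a copy of the material.
            cloneRenderer.sharedMaterial = pointMaterial;

            // By contrast, reading cloneRenderer.material (even just to change
            // its color) would give this clone its own private material copy.
        }
    }
}
```

With this in place, the Batches and "Saved by batching" numbers in the Stats window are the quickest way to check whether batching kicked in.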

Hmmm, interesting. Is .sharedMaterial always available? If so, you might raise an issue on the HTK to change all instances to use that if it will help with performance.

So, I'm not very familiar with .sharedMaterial. But I think it should always be available whenever you instantiate an existing object. If you don't mess with the material, the clones automatically share it. But using .sharedMaterial might break other use cases.

Again, I'm not very familiar with this. Perhaps someone who understands dynamic batching can shed some light. It's a shame the Unity documentation on all this is somewhat sloppy.

Very interesting. I created a new issue for this in the toolkit. Hopefully someone can review and comment.

https://github.com/Microsoft/HoloToolkit-Unity/issues/474

Our Holographic world is here

RoadToHolo.com WikiHolo.net @jbienz

I work in Developer Experiences at Microsoft. My posts are based on my own experience and don't represent Microsoft or HoloLens.

Be aware that improper use of renderer.material will cause memory leaks in your application.

Stephen Hodgson

Microsoft HoloLens Agency Readiness Program

Virtual Solutions Developer at Saab

HoloToolkit-Unity Moderator

Just be aware that changing the color through the shared material changes it for all of the objects. So if you're trying to highlight just one of them by updating its color, they will all highlight. But I believe this does solve the dynamic batching problem by making all the renderers use the same material instance.

This might be what you're looking for?

http://thomasmountainborn.com/2016/05/25/materialpropertyblocks/
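
As a rough sketch of the MaterialPropertyBlock approach from that link: the component below overrides the color for one renderer without touching the shared material. The class name PointHighlighter and the "_Color" property name are assumptions; the shader in use may call its color property something else.

```csharp
using UnityEngine;

// Hypothetical per-point component: changes one renderer's color through a
// MaterialPropertyBlock, so no per-object material copy is created.
public class PointHighlighter : MonoBehaviour
{
    MaterialPropertyBlock block;
    Renderer pointRenderer;
    int colorId;

    void Awake()
    {
        block = new MaterialPropertyBlock();
        pointRenderer = GetComponent<Renderer>();
        // Assumption: the shader exposes its main color as "_Color".
        colorId = Shader.PropertyToID("_Color");
    }

    public void SetColor(Color color)
    {
        // Start from the renderer's current overrides, change only the color,
        // then hand the block back to the renderer.
        pointRenderer.GetPropertyBlock(block);
        block.SetColor(colorId, color);
        pointRenderer.SetPropertyBlock(block);
    }
}
```

Calling SetColor(Color.yellow) on the gazed-at point would highlight only that point. Whether renderers with differing property blocks still batch together depends on the Unity version and the shader, so it is worth checking the draw call count afterwards.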

A side note: as far as I know, the only scripts inside the HoloToolkit (not in the test folders) that actually change the .material instance are SurfacePlane.cs, Utils.cs, DirectionIndicator.cs, and ManualHandControl.cs.

The other scripts in the test folders are purely examples. They most likely don't represent each developer's needs and are not production ready, so go ahead and make your own implementation that fits your project's needs.

Does anyone know if GPU instancing is available on the HoloLens?

Yes, it is. You have to supply your transformations in a separate buffer as part of the binding, but it's done in one call. Your device has to run feature level 11_1.

I don't know what Unity is doing wrong, but the device supports it. My current project, developed in DirectX rather than Unity, is running a massive number of meshes at a fairly stable frame rate.

@stephenhodgson

You are correct that changing the color using .sharedMaterial changes all of the colors. However, there is a workaround that I got working yesterday. You can use vertex coloring and have a different color for each object. You need an appropriate shader to get vertex coloring, but I simply added the vertex coloring parameters I needed to an existing shader to get it working.

You can use the Mesh.colors property to change the color independently.

There's another Unity page somewhere about making a shader compatible with vertex coloring. Can't find it currently.
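
A minimal sketch of the Mesh.colors idea, assuming the material's shader actually reads vertex colors (the stock shaders may not). The class name VertexColorPoint is made up for the example. Note that MeshFilter.mesh hands back a per-object copy of the mesh, much like renderer.material does for materials, so the copy is destroyed explicitly here.

```csharp
using UnityEngine;

// Hypothetical per-point component: tints one object by writing the same
// color into every vertex of its own mesh copy, leaving the material shared.
public class VertexColorPoint : MonoBehaviour
{
    Mesh instanceMesh;

    public void SetColor(Color color)
    {
        if (instanceMesh == null)
        {
            // Accessing .mesh (not .sharedMesh) creates a private copy for this object.
            instanceMesh = GetComponent<MeshFilter>().mesh;
        }

        Color[] colors = new Color[instanceMesh.vertexCount];
        for (int i = 0; i < colors.Length; i++)
        {
            colors[i] = color;
        }
        instanceMesh.colors = colors;
    }

    void OnDestroy()
    {
        // The private mesh copy is not cleaned up automatically.
        if (instanceMesh != null)
        {
            Destroy(instanceMesh);
        }
    }
}
```

The trade-off is one mesh copy per colored point instead of one material copy per point, so memory use is worth watching with thousands of objects.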