Hello everyone.

The Mixed Reality Forums here are no longer being used or maintained.

There are a few other places we would like to direct you to for support, both from Microsoft and from the community.

The first way we want to connect with you is our mixed reality developer program, which you can sign up for at https://aka.ms/IWantMR.

For technical questions, please use Stack Overflow, and tag your questions using either hololens or windows-mixed-reality.

If you want to join in discussions, please do so in the HoloDevelopers Slack, which you can join by going to https://aka.ms/holodevelopers, or in our Microsoft Tech Communities forums at https://techcommunity.microsoft.com/t5/mixed-reality/ct-p/MicrosoftMixedReality.

And always feel free to hit us up on Twitter @MxdRealityDev.

Access to raw depth data stream

CurvSurf

Is the raw depth data (a point cloud with xyz coordinates) accessible to applications?

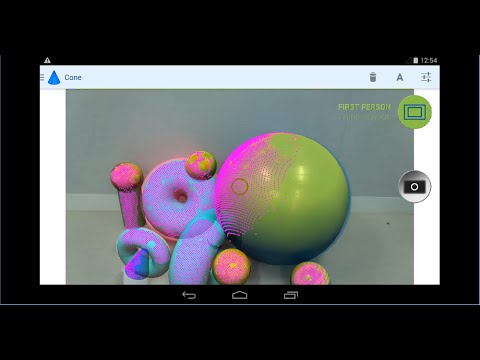

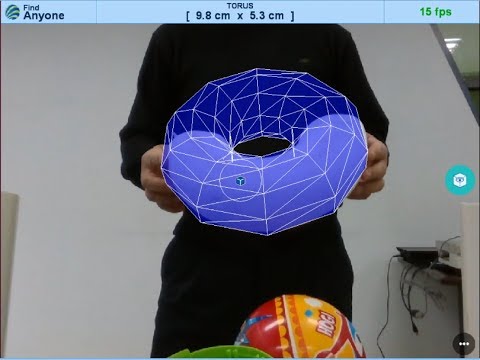

We would like to apply our middleware for real-time object detection, recognition, and measurement to HoloLens as well, in the same manner as shown here: https://www.youtube.com/watch?v=HGwojt6Gqrc

Thank you

Best Answers

Bryan

It won't be the RAW sensor data stream, but check out the DirectX documentation on Spatial Mapping:

https://dev.windows.com/en-us/holographic/spatial_mapping_in_directx

This will give you access to the coordinates in the SpatialSurfaceMesh:

https://msdn.microsoft.com/en-us/library/windows/apps/windows.perception.spatial.surfaces.spatialsurfacemesh.aspx

Hopefully that'll give you enough data for your middleware to do its magic.
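
For reference, a minimal Python sketch of turning raw mesh vertex bytes into coordinates. It assumes the mesh was requested with the R16G16B16A16IntNormalized position format (check SpatialSurfaceMeshOptions.VertexPositionFormat) and that the bytes of SpatialSurfaceMesh.VertexPositions have already been copied out; that copy step is platform code not shown here. Each signed-normalized component is multiplied by SpatialSurfaceMesh.VertexPositionScale, and the results are still in the mesh's own CoordinateSystem, so a transform to your application's frame is still required. The function names are hypothetical.

```python
import struct
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

VERTEX_STRIDE = 8  # four little-endian int16 components (x, y, z, w)


def decode_snorm16(value: int) -> float:
    """Map a signed-normalized 16-bit integer to [-1.0, 1.0]."""
    # Under the usual SNORM convention both -32768 and -32767 map to -1.0.
    return max(value / 32767.0, -1.0)


def decode_positions(raw: bytes, scale: Vec3) -> List[Vec3]:
    """Decode R16G16B16A16 SNORM vertex positions and apply a per-axis scale.

    Assumption: `raw` holds bytes copied from the mesh's vertex position
    buffer and `scale` is the mesh's vertex position scale. The w component
    is ignored.
    """
    if len(raw) % VERTEX_STRIDE:
        raise ValueError("buffer length is not a multiple of the vertex stride")
    positions: List[Vec3] = []
    for x, y, z, _w in struct.iter_unpack("<4h", raw):
        positions.append((
            decode_snorm16(x) * scale[0],
            decode_snorm16(y) * scale[1],
            decode_snorm16(z) * scale[2],
        ))
    return positions
```

The decoded list is the point set (xyz coordinates) that point-based middleware would consume.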

Jesse_McCulloch

admin

So, an update for you all. In late February, at the HoloDevelopers Summit at Microsoft, some of us received pre-release builds of RS4 on our HoloLens, which includes a feature called Research Mode. We don't have any documentation on it yet, but Research Mode will allow access to the raw data. The caveat is that you can't publish apps to the Store using the APIs exposed by Research Mode, and in the future there may be breaking changes to those APIs.

Answers

As you pointed out, the 'SpatialSurfaceMesh' class provides all the information we need. Thank you!

We expect at most 1 frame of latency for the mesh data, thanks to the powerful GPU/HPU pipeline. We hope our middleware will add only 0 to 2 frames of latency for vertex processing before the resulting object geometry is displayed.

Thanks
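
The latency estimate above is easier to judge in milliseconds. A small sketch, assuming a 60 Hz update rate (the rate HoloLens apps target; treat it as an assumption here): 1 frame of mesh latency plus 0 to 2 frames of processing is 1 to 3 frames, roughly 17 to 50 ms. The function names are hypothetical.

```python
# Assumption: 60 Hz display/update rate.
DISPLAY_HZ = 60.0


def frames_to_ms(frames: float, hz: float = DISPLAY_HZ) -> float:
    """Convert a latency expressed in frames to milliseconds."""
    return frames * 1000.0 / hz


def latency_budget_ms(mesh_frames: float, middleware_frames: float,
                      hz: float = DISPLAY_HZ) -> float:
    """End-to-end latency: mesh delivery plus middleware processing."""
    return frames_to_ms(mesh_frames + middleware_frames, hz)


if __name__ == "__main__":
    best = latency_budget_ms(1, 0)   # 1 frame of mesh latency, no extra
    worst = latency_budget_ms(1, 2)  # 1 frame of mesh latency plus 2 frames
    print(f"best case:  {best:.1f} ms")
    print(f"worst case: {worst:.1f} ms")
```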

But aren't those completely different beasts? If I want raw depth data, then I would want it at the frame rate a depth camera can deliver. If I understand correctly, the spatial meshes (integration) will be delivered at significantly lower frame rates?

I would really like an unsupported "hacker mode" (meaning apps developed with it could never end up in the Store) that gives people full access to the hardware. I understand (I believe, at least) that you want to be able to change the spatial mapping technology. Still, for artists, hackers, and other creative folks there is so much potential in raw sensor access. It would be great to enable that even if the results are not proper, allowable HoloLens apps.

We will examine and compare the 'Cached Spatial Mapping' and 'Continuous Spatial Mapping' modes.

'Cached Spatial Mapping' should be suitable for the geometric construction of stationary objects, while 'Continuous Spatial Mapping' should suit tracking of moving objects.

Based on our experience with Intel RealSense, we expect the continuous meshing process inside HoloLens to be fast enough to deliver vertices for real-time object tracking.

Hi. I am checking on this thread and wondering whether you had any success using the spatial mapping mesh from DirectX. Can the HoloLens team let us know whether there will be a similar Unity capability in the near future?

@YBA

Last week, for the first time, we succeeded in applying our middleware to spatial mapping data from HoloLens:

https://www.youtube.com/watch?v=C7mLH_5QzvU

But the raw depth data are indispensable:

https://www.youtube.com/watch?v=8ruiudETvuY

Following up on this. Hey Microsoft! Some of us could do some pretty awesome things if you gave us access to the raw sensor data. Please?

@brainsandspace

We at CurvSurf are eager to apply our middleware FindSurface to the raw depth data stream of HoloLens, as we have with those of Google Tango and Intel RealSense. However, we expect that the HPU architecture of HoloLens is either not designed for this or not yet ready to be released. We may have to wait for the next version of HoloLens.

I don't think MS wants anyone to have access to the raw data. They might make a product that is too 'good'; they want that data kept for their in-house studio people. Basically, we're not 'good enough', 'smart enough', and gosh darn it, MS just doesn't like us.

@CurvSurf

Have you tried to measure box dimensions using HoloLens? If so, what accuracy can it achieve?

@NiBao

HoloLens is not suitable for measuring boxes, because Spatial Mapping trims off convex surfaces and fills in concave surfaces. A box smaller than 2 ft in width, height, or length will be mapped as a small mound.

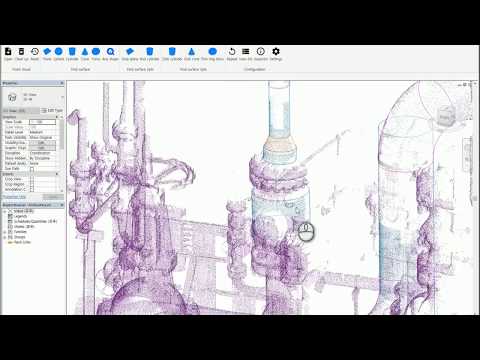
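
Why a small box turns into a mound can be illustrated with any low-pass filter. HoloLens does not document its surface reconstruction as a moving average; this sketch only uses a 1-D moving average over a height profile as a stand-in, and the 2 cm sample spacing and 31-sample (about 60 cm) window are hypothetical. With those numbers a 20 cm wide, 30 cm tall box peaks at 10/31 of its height (about 9.7 cm), while a 1 m wide box keeps its full height in the middle.

```python
from typing import List


def box_profile(n: int, start: int, width: int, height: float) -> List[float]:
    """Height samples of a flat floor with a box `width` samples wide on it."""
    return [height if start <= i < start + width else 0.0 for i in range(n)]


def moving_average(profile: List[float], kernel: int) -> List[float]:
    """Centred moving average (a stand-in low-pass filter); window truncated at the ends."""
    half = kernel // 2
    smoothed = []
    for i in range(len(profile)):
        lo = max(0, i - half)
        hi = min(len(profile), i + half + 1)
        window = profile[lo:hi]
        smoothed.append(sum(window) / len(window))
    return smoothed


if __name__ == "__main__":
    SAMPLE_M = 0.02  # hypothetical spacing: 2 cm per sample
    KERNEL = 31      # hypothetical smoothing width: about 60 cm
    for width_m in (0.2, 1.0):
        width = round(width_m / SAMPLE_M)
        peak = max(moving_average(box_profile(200, 50, width, 0.30), KERNEL))
        print(f"{width_m:.1f} m wide, 0.30 m tall box -> peak {peak:.3f} m")
```

The same averaging that flattens convex peaks also raises concave dips, which matches the trimming and filling described above.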

@CurvSurf

Thanks for the information. I found some videos showing construction workers using HoloLens for measurement on job sites, but I am wondering whether the accuracy is good enough. As you mentioned, raw depth data are not available, so we may not be able to get very accurate measurements (say +/- 5 mm) of MEP objects, doors, windows, etc., right?

@NiBao

You are correct. In the videos you viewed, some large planes (e.g. the floor or a wall) may have been used as datums, on which 3-D CAD models or virtual objects (holograms) could be placed.

Current AR demos (showing an interaction between real and virtual objects) are based on plane fitting to floor, table top, street, sidewalk, etc., on which virtual objects are being placed and moved.

If we can measure and extract not only planes but also spheres, cylinders, cones, tori, and boxes as the geometry of real objects, there will be many more AR applications.
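
Plane fitting of the kind described above can be sketched as an ordinary least-squares fit. This is a generic textbook method, not FindSurface's algorithm. It assumes a Y-up frame and a roughly horizontal surface such as a floor or table top, since it models height as y = a*x + b*z + c and cannot represent vertical walls. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _solve3(m: List[List[float]], r: List[float]) -> List[float]:
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    a = [list(m[i]) + [r[i]] for i in range(3)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda k: abs(a[k][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("degenerate point set (e.g. collinear points)")
        a[col], a[pivot] = a[pivot], a[col]
        for row in range(col + 1, 3):
            factor = a[row][col] / a[col][col]
            for k in range(col, 4):
                a[row][k] -= factor * a[col][k]
    x = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        x[i] = (a[i][3] - sum(a[i][k] * x[k] for k in range(i + 1, 3))) / a[i][i]
    return x


def fit_horizontal_plane(points: Sequence[Point]) -> Tuple[float, float, float]:
    """Least-squares fit of y = a*x + b*z + c via the normal equations."""
    if len(points) < 3:
        raise ValueError("need at least three points")
    sxx = sxz = szz = sx = sz = sxy = szy = sy = 0.0
    for x, y, z in points:
        sxx += x * x
        sxz += x * z
        szz += z * z
        sx += x
        sz += z
        sxy += x * y
        szy += z * y
        sy += y
    n = float(len(points))
    a, b, c = _solve3([[sxx, sxz, sx], [sxz, szz, sz], [sx, sz, n]], [sxy, szy, sy])
    return a, b, c


def rms_residual(points: Sequence[Point], plane: Tuple[float, float, float]) -> float:
    """Root-mean-square vertical distance of the points from the fitted plane."""
    a, b, c = plane
    return math.sqrt(sum((y - (a * x + b * z + c)) ** 2 for x, y, z in points) / len(points))
```

The RMS residual gives a rough flatness check; fitting spheres, cylinders, cones, or tori needs nonlinear models beyond this sketch.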

3-D Augmented Reality - Microsoft HoloLens

https://youtu.be/_Cao7bVZAMg

3-D Augmented Reality - Google Tango

https://youtu.be/QHp5grEi1NE

3-D Augmented Reality - Apple ARKit

https://youtu.be/FzdrxtPQzfA

Real Time 3-D Object Recognition

https://youtu.be/6tn4rMeIBaw

FindSurface SDK - Revit Plugin Demo

https://youtu.be/ThpXAFJEBJ4

@CurvSurf

Thanks so much for your comments. As I am a HoloLens newbie, may I ask some simple questions?

1. Even if we can extract two feature points from the RGB image, we still cannot know the exact dimension (error less than +/- 5 mm at 1 m range), right? We have no way to get the point cloud coordinates of these two points through the HoloLens APIs.
2. Can we find it by using stereo vision from the front cameras?
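
On question 2, the standard rectified-stereo relation Z = f*B/d shows how disparity precision limits depth accuracy: differentiating gives dZ ≈ Z^2/(f*B) * dd, so the error grows with the square of distance. This sketch assumes a calibrated, rectified camera pair whose frames you can read; the focal length (500 px) and baseline (10 cm) below are hypothetical, not HoloLens specifications. With those numbers, holding +/- 5 mm at 1 m needs 0.25 px disparity precision, and the same 0.25 px gives about 20 mm at 2 m.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(focal_px: float, baseline_m: float, depth_m: float,
                disparity_err_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z**2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


def required_disparity_precision(focal_px: float, baseline_m: float, depth_m: float,
                                 depth_err_m: float) -> float:
    """Disparity precision (pixels) needed to reach a target depth error."""
    return depth_err_m * focal_px * baseline_m / depth_m ** 2


if __name__ == "__main__":
    F_PX, BASELINE_M = 500.0, 0.10  # hypothetical camera parameters
    print(required_disparity_precision(F_PX, BASELINE_M, 1.0, 0.005))
    print(depth_error(F_PX, BASELINE_M, 2.0, 0.25))
```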

@NiBao

Using raycasts may help you. A gaze ray hits the Spatial Mapping meshes of your target object's surfaces (the API is available). The hit point (i.e. the measurement point) then substitutes for the unknown real object point.
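
The gaze raycast can be sketched with the Möller-Trumbore ray-triangle test. In practice the engine's own raycast API would do this; the sketch only shows the geometry, assuming the mesh is available as vertex positions plus a triangle index list in one coordinate frame. The distance between two hits is then the measurement, and it is only as accurate as the mesh it hits. The function names and the sample floor patch are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3, v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Möller-Trumbore test; returns the ray parameter t of the hit, or None."""
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:  # ray parallel to the triangle plane
        return None
    inv_det = 1.0 / det
    s = _sub(origin, v0)
    u = _dot(s, p) * inv_det
    if u < 0.0 or u > 1.0:
        return None
    q = _cross(s, e1)
    v = _dot(direction, q) * inv_det
    if v < 0.0 or u + v > 1.0:
        return None
    t = _dot(e2, q) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin


def raycast_mesh(origin: Vec3, direction: Vec3, vertices: Sequence[Vec3],
                 indices: Sequence[int]) -> Optional[Vec3]:
    """Nearest hit point of the ray against an indexed triangle list."""
    best: Optional[float] = None
    for i in range(0, len(indices) - 2, 3):
        t = ray_triangle(origin, direction, vertices[indices[i]],
                         vertices[indices[i + 1]], vertices[indices[i + 2]])
        if t is not None and (best is None or t < best):
            best = t
    if best is None:
        return None
    return (origin[0] + best * direction[0],
            origin[1] + best * direction[1],
            origin[2] + best * direction[2])


if __name__ == "__main__":
    # Hypothetical 2 m x 2 m floor patch at y = 0 (Y-up), split into two triangles.
    floor = [(-1.0, 0.0, -1.0), (1.0, 0.0, -1.0), (1.0, 0.0, 1.0), (-1.0, 0.0, 1.0)]
    tris = [0, 1, 2, 0, 2, 3]
    a = raycast_mesh((0.3, 1.6, -0.2), (0.0, -1.0, 0.0), floor, tris)
    b = raycast_mesh((-0.3, 1.6, 0.6), (0.0, -1.0, 0.0), floor, tris)
    if a and b:
        print(f"distance between hits: {math.dist(a, b):.3f} m")
```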

@CurvSurf

Thanks for your suggestions! But even though the three-dimensional coordinates of the hit point could be found by intersecting the gaze ray with the spatial mapping mesh (not raw depth data), we still cannot guarantee the accuracy, right? Moreover, where the spatial mapping mesh is not available at the point of interest, we have no way to measure at all... It seems I need "Research Mode", as Jesse_McCulloch mentioned, to tackle this problem?

@Jesse_McCulloch

Thanks for the info. How can I get access to Research Mode?

Also, as moderator, can you delete the six duplicated posts above?

@NiBao

I agree.

Spatial Mapping envelops a smaller volume than the actual object surface because it truncates convex details.

Hey @CurvSurf & @NiBao ,

So I cleaned up the duplicate posts. They had ended up in the spam filter, and I pushed a lot of stuff from yesterday through. Sorry about that.

As for Research Mode, you will need to wait for the next build of the OS to come to the HoloLens. In the current OS build, there is an option to enroll your device in the Insider Program, which will get you the build sooner than if the device is not enrolled. Microsoft has not given out a timeline for when they are pushing the insider build out; the hope is for the March timeframe, but lots of things could affect that timeline.